Gabriel Peyré on Twitter: "The soft-max is the gradient of the log-sum-exp. Central to performing classification using logistic loss. Needs to be stabilised using the log-sum-exp trick. Also at the heart of
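The three claims in the tweet can be checked numerically. First, ∂/∂z_i log Σ_j exp(z_j) = exp(z_i) / Σ_j exp(z_j), which is softmax(z)_i. Second, the multiclass logistic loss is logsumexp(z) − z_y. Third, shifting by max(z) keeps exp from overflowing. The following is a minimal stdlib-only Python sketch. The function names (`logsumexp`, `softmax`, `logistic_loss`, `finite_diff_grad`) are illustrative and do not come from the tweet. The gradient check uses central finite differences.

```python
import math

def logsumexp(z):
    """log(sum_i exp(z_i)), stabilised by shifting by max(z) before exponentiating."""
    m = max(z)
    return m + math.log(sum(math.exp(zi - m) for zi in z))

def softmax(z):
    """Softmax with the same max shift: mathematically unchanged, numerically safe."""
    m = max(z)
    e = [math.exp(zi - m) for zi in z]
    total = sum(e)
    return [ei / total for ei in e]

def logistic_loss(z, y):
    """Multiclass logistic (cross-entropy) loss for scores z and true class index y:
    -log softmax(z)[y] = logsumexp(z) - z[y]."""
    return logsumexp(z) - z[y]

def finite_diff_grad(f, z, h=1e-6):
    """Central-difference approximation of the gradient of f at z."""
    grad = []
    for i in range(len(z)):
        zp, zm = list(z), list(z)
        zp[i] += h
        zm[i] -= h
        grad.append((f(zp) - f(zm)) / (2 * h))
    return grad

z = [0.5, -1.0, 2.0]
print(softmax(z))                            # exact softmax
print(finite_diff_grad(logsumexp, z))        # numerical gradient of log-sum-exp; agrees with the line above
print(logsumexp([1000.0, 1001.0, 1002.0]))   # large scores: no overflow thanks to the max shift
print(logistic_loss(z, y=2))
```

Without the shift, `math.exp(1000.0)` alone would raise `OverflowError`. With it, the largest term is always exp(0) = 1.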

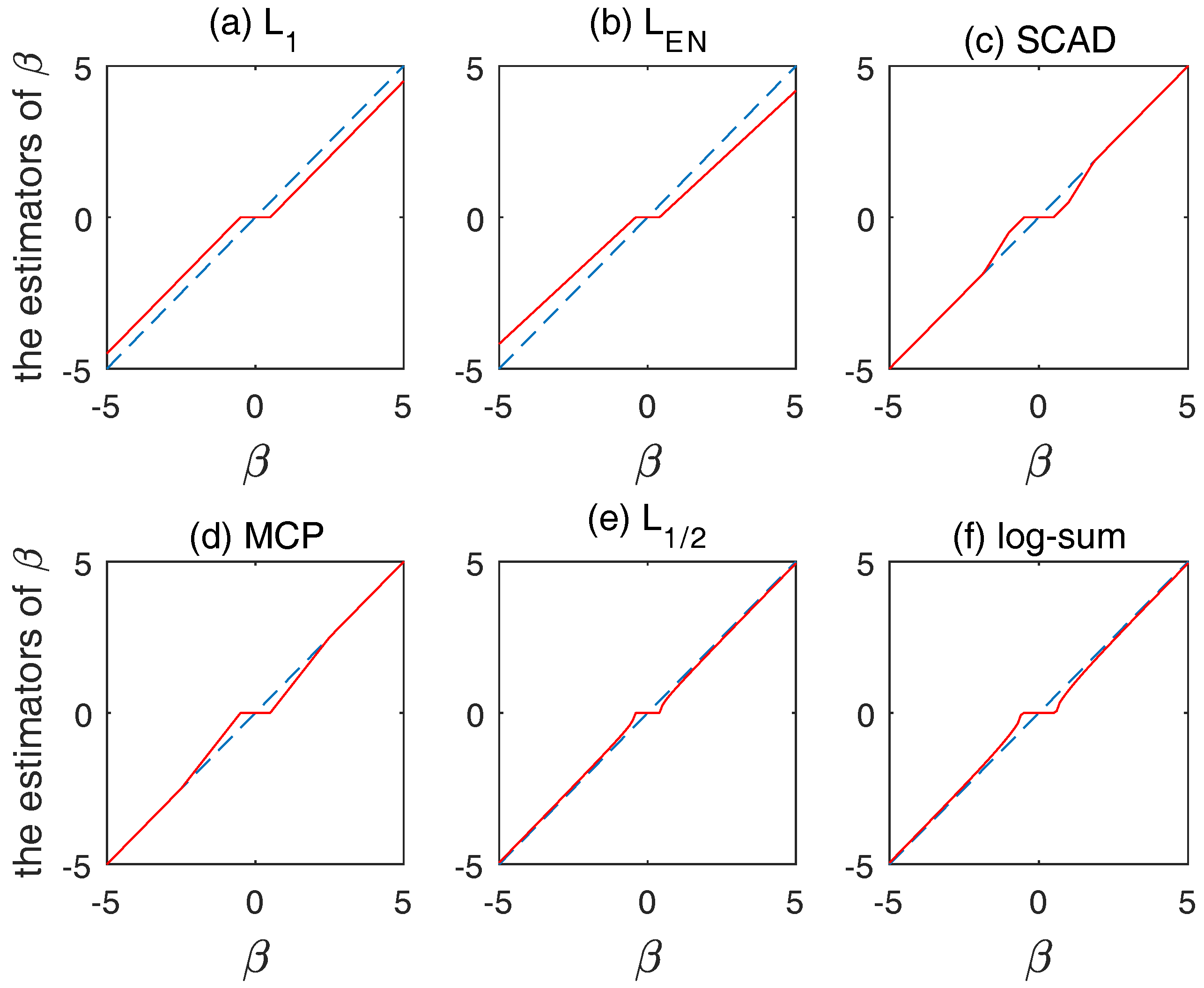

IJMS | Free Full-Text | Descriptor Selection via Log-Sum Regularization for the Biological Activities of Chemical Structure

Find the sum of n terms of the series `log a + log (a^2/b) + log (a^3/b^2) + log(a^4/b^3)...` - YouTube
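The series in this title is arithmetic in the logarithms, with first term log a and common difference log(a/b). The derivation below assumes a, b > 0 and a single logarithm base throughout. It is a standard arithmetic-progression argument and does not reproduce the video's own working.

```latex
\begin{aligned}
T_k &= \log\frac{a^k}{b^{k-1}} = k\log a - (k-1)\log b, \\
T_{k+1} - T_k &= \log a - \log b = \log\frac{a}{b} \quad \text{(constant, so the series is arithmetic)}, \\
S_n &= \sum_{k=1}^{n} T_k = \frac{n(n+1)}{2}\log a - \frac{n(n-1)}{2}\log b
     = \frac{n}{2}\log\frac{a^{n+1}}{b^{n-1}}.
\end{aligned}
```

As a quick check, n = 2 gives log a + log(a²/b) = log(a³/b), which matches (2/2) log(a³/b¹).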

Underflow/overflow from improper log, then sum, then exp · Issue #5 · lanl-ansi/inverse_ising · GitHub
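The failure mode in this issue title can be illustrated with a minimal Python sketch. This sketch assumes the issue concerns evaluating log(Σ exp(z_i)) literally. The repository's actual code and fix are not shown here, and `naive_logsumexp`, `stable_logsumexp`, and `try_naive` are hypothetical names. Large inputs overflow in `exp`. Very negative inputs underflow to 0.0, after which `log(0.0)` fails. Shifting by the maximum avoids both problems.

```python
import math

def naive_logsumexp(z):
    """Evaluates log(sum(exp(z))) literally: exp may overflow or underflow."""
    return math.log(sum(math.exp(zi) for zi in z))

def stable_logsumexp(z):
    """Shift by max(z) so the largest exponent is exp(0) = 1 (finite inputs assumed)."""
    m = max(z)
    return m + math.log(sum(math.exp(zi - m) for zi in z))

def try_naive(z):
    """Return the naive result, or the name of the exception it raises."""
    try:
        return naive_logsumexp(z)
    except (OverflowError, ValueError) as err:
        return type(err).__name__

# Overflow case, underflow case, and a benign case where both agree.
for z in ([1000.0, 1000.0], [-1000.0, -1000.0], [1.0, 2.0]):
    print(z, try_naive(z), stable_logsumexp(z))
```

For `[1000.0, 1000.0]`, `math.exp` raises `OverflowError`. For `[-1000.0, -1000.0]`, every term underflows to 0.0 and `math.log(0.0)` raises `ValueError`. The stable version returns ±1000 + log 2 in both cases.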